Authors: Jonathan Walberg, Noah Reed, & Ethan Connell

On March 15th, 2026, Politico published an article titled “Taiwan reports large-scale Chinese military aircraft presence near island.”[i] The title exaggerated what was in fact a relatively normal day of People’s Liberation Army (PLA) activity around Taiwan. Nevertheless, the piece caught the eye of many observers on social media. Within hours, thousands of finance and Open-Source Intelligence (OSINT) accounts began to regurgitate the headline without linking the article or explaining the nuance behind the report of “large-scale” activity.[ii] These posts, which seemed to imply that China was preparing significant military action against Taiwan, accumulated tens of thousands of likes and began trending on both Twitter/X and Bluesky.

Essentially, we witnessed a game of telephone taking place on the internet. A single headline was rapidly shared, rephrased, and simplified across platforms, with each iteration shedding context and adding interpretation. Within hours, an observation about aircraft activity became a claim about encirclement, with accounts sharing posts that declared Taiwan “surrounded” by the PLA.

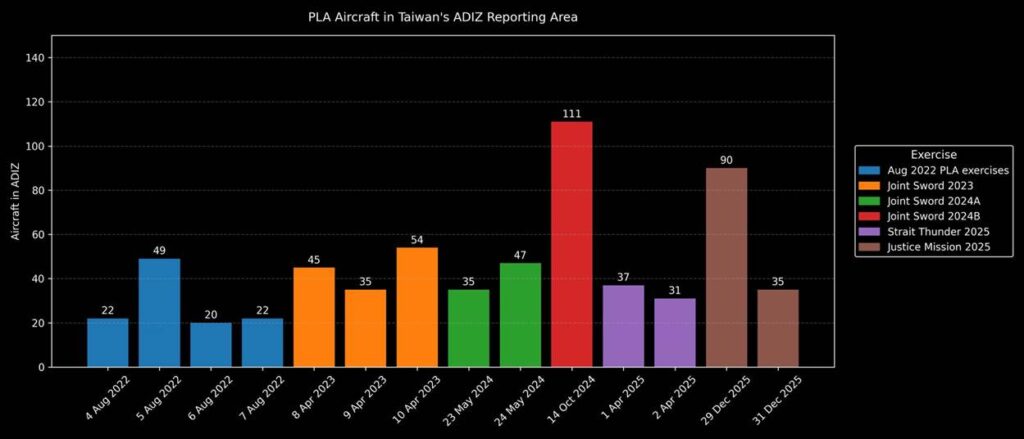

This incident provides a useful window into how even relatively small actions by the PLA around Taiwan can significantly swing how actors engaged in disinformation cover Taiwan on social media. In a crisis-prone environment such as Taiwan’s, where China has carefully shaped the narrative environment for years through large exercises, even one article can cascade into a broader wave of false and misleading claims built on recycled visuals and improvised escalation narratives.[iii]

The Problem With ADIZ Reporting

Following two weeks of depressed PLA activity in and around Taiwan’s Air Defense Identification Zone (ADIZ), Taiwan’s Ministry of National Defense (MND) reported that 26 Chinese aircraft were detected operating around Taiwan, with 16 aircraft entering its ADIZ.[iv] While this figure is indeed above 2026’s daily average of 4.5, it is in fact only “large-scale” if compared to the previous two weeks of little to no activity, something that the Politico article makes clear.[v] When viewed holistically, however, March 14th’s numbers are less significant, representing only the eighth-largest ADIZ incursion of 2026. The event’s significance is further diminished by the resumption of low aerial activity the following day, March 15th.[vi]
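The comparison in this paragraph can be made concrete with a short sketch. The 16 ADIZ entries and the 4.5 daily average come from the text above; the list of earlier daily counts is purely hypothetical, invented only so that the ranking and baseline steps have something to run on, and it should not be read as MND data. The text does not say whether the 4.5 average refers to detections or to ADIZ entries, so the sketch assumes ADIZ entries.

```python
from statistics import mean

# Figures taken from the article text.
ADIZ_ENTRIES_MARCH_14 = 16
DAILY_AVERAGE_2026 = 4.5  # ASSUMPTION: treated here as average daily ADIZ entries

# HYPOTHETICAL daily ADIZ-entry counts for earlier days of the year.
# Placeholder values for illustration only; the final 14 entries stand in
# for the two-week lull described in the text.
HYPOTHETICAL_PRIOR_DAYS = [31, 19, 24, 22, 18, 27, 20, 5, 3] + [
    0, 1, 0, 2, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0
]


def ratio_to_average(count: float, average: float) -> float:
    """Return how many times larger a single day is than the daily average."""
    return count / average


def rank_among(count: int, prior_counts: list[int]) -> int:
    """Return the 1-based rank of `count` among prior days (1 = largest)."""
    return 1 + sum(1 for c in prior_counts if c > count)


def recent_baseline(prior_counts: list[int], window: int) -> float:
    """Return the mean of the most recent `window` days."""
    return mean(prior_counts[-window:])


if __name__ == "__main__":
    print("Multiple of yearly average:",
          round(ratio_to_average(ADIZ_ENTRIES_MARCH_14, DAILY_AVERAGE_2026), 2))
    print("Rank among hypothetical prior days:",
          rank_among(ADIZ_ENTRIES_MARCH_14, HYPOTHETICAL_PRIOR_DAYS))
    print("Mean of preceding 14 hypothetical days:",
          round(recent_baseline(HYPOTHETICAL_PRIOR_DAYS, 14), 2))
```

The point of the sketch is that the same count looks very different depending on the baseline chosen: dramatic against a two-week lull, moderate against the yearly average, and unremarkable when ranked against the year's larger days.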

It is routine for news organizations outside Taiwan to report on PLA activity in Taiwan’s ADIZ, especially when violation numbers seem to be abnormally high or low. During the recent period of lower activity, for example, many major news organizations published stories on the unusual lull.[vii]

Reporting on something as niche as Taiwan’s ADIZ creates a structural vulnerability. Headlines often compress complex operational data into simplified, attention-grabbing phrases that lack important context. On social media, these headlines are frequently detached from the underlying reporting, leaving readers to infer meaning from incomplete signals.

This dynamic does not require deliberate manipulation. Headline framing can make routine activity appear more consequential than it is, creating openings for exaggerated interpretations. A similar pattern appears during PLA joint exercises, when maps of exercise zones or footage of missile launches circulate without context, prompting observers to interpret routine demonstrations as evidence of blockade preparations or imminent invasion.

The Misinformation Cascade Begins

The cascade began with a flurry of financial news accounts simply sharing the article title on social media, something they likely received from news wire services. The simplicity of the title, and specifically the ease with which it could be misconstrued as suggesting that China was preparing a military build-up, instantly attracted massive attention from OSINT accounts that simply aggregate news headlines, usually in a way that exaggerates the severity of events.[viii]

What began as “Taiwan reports large-scale Chinese military aircraft presence near island” became “TAIWAN DETECTS MASSIVE CHINESE MILITARY PRESENCE SURROUNDING ISLAND” and “BREAKING; TAIWAN ON HIGH ALERT.”[ix] As these posts began to circulate, generating tens of thousands of likes and reposts, commentary accounts began to post uninformed analysis.[x] This fed into the algorithm and expanded the audience to circles outside of the “OSINT community.” Less than six hours after the publication of the original Politico article, the dominant discourse surrounding it bore little resemblance to its actual substance.

As the posts spread, some of those referencing the Politico report began to adopt the phrase “Taiwan surrounded.”[xi]

This linguistic shift was not trivial. Describing aircraft and ships “around Taiwan” conveys an operational snapshot: an observation about detected activity. Describing Taiwan as “surrounded,” however, implies a fundamentally different military posture. The term suggests physical enclosure, coercive leverage, or even the early stages of blockade operations. The difference between these descriptions marks the point where an activity report becomes a strategic claim.

In many viral posts, the progression followed a familiar pattern: the original numbers were omitted, but the language of heightened military activity and encirclement remained. As the messaging grew stronger, the belief that something larger was beginning came to seem like a logical conclusion. At that moment, the misinformation cascade began.

The initial spread of the Politico headline was driven by rapid reposting across financial news and OSINT accounts, many of which likely received the story through news wire services. Each repost preserved the sense of urgency while shedding the context needed to interpret the underlying activity.

But repetition alone does not explain why the narrative gained traction. Ongoing global events, emotional responses such as fear, and the pressure to keep pace with breaking news all contributed to how the headline was interpreted. Together, these forces helped transform an activity report into something that appeared far more consequential.

Context Collapse and the Vulnerability of Breaking News

This transformation illustrates a recurring problem in the information environment surrounding security crises: context collapse.[xii] Technical military reporting often relies on specialized terminology whose meaning depends heavily on operational context. As a result, phrases like “large-scale activity,” “operating near the island,” or “around Taiwan” can be easily misinterpreted when removed from the operational reporting framework used by defense institutions. This is especially relevant during the ongoing operations in the Middle East. With airstrikes, bombings, and naval fires filling social media feeds, the public is hyper-fixated on action and worried about the conflict spilling over with global consequences.

On media platforms that reward simplicity and emotional clarity, those phrases can quickly evolve into stronger claims. A surge becomes an escalation. Activity becomes encirclement. A snapshot becomes a strategic turning point.

Research on crisis communication shows that information environments characterized by uncertainty and urgency often degrade shared situational awareness.[xiii] In these environments, audiences rely increasingly on simplified narratives rather than technical explanations, making complex military developments easier to misinterpret or exaggerate.[xiv]

The Taiwan Strait is particularly vulnerable to this dynamic because even routine military movements carry geopolitical significance. For audiences with limited familiarity with PLA operational patterns, the difference between an activity spike and a strategic shift may not be obvious. As a result, ambiguity can easily be filled with worst-case interpretations.

Fear as a Carrier of Misinformation

One of the major factors fueling the spread of discussion around the activity was a sense of urgency and fear. What had begun as a description of aircraft counts was quickly processed as a signal of possible escalation. This shift mattered because it changed the role of the audience. Rather than evaluating a technical report, users were reacting to what appeared to be the early stages of a crisis.

Fear alters how individuals process information under uncertainty. Research on information diffusion shows that emotionally arousing content, particularly content that evokes anxiety, reduces the likelihood that individuals will pause to verify claims before sharing them.[xv] When the perceived stakes are high, the cost of inaction can feel greater than the risk of being wrong.[xvi] In this context, sharing becomes a precautionary behavior: a way of responding to a potential threat rather than confirming a verified fact.

This dynamic helps explain how the narrative evolved so quickly. As users encountered repeated claims that Taiwan was being “surrounded,” the framing itself encouraged a particular interpretation of events, one in which time was limited and escalation plausible. Under those conditions, ambiguity is often resolved in the direction of worst-case assumptions.

The Taiwan Strait is especially susceptible to this process because it is already widely understood as a high-risk flashpoint.[xvii] Reports of increased military activity therefore do not enter a neutral information environment. They are received by audiences primed to expect crisis, making emotionally charged interpretations more intuitive and more difficult to dislodge.

In this case, fear did not distort a stable, pre-existing understanding of events. It constructed a distorted understanding from scratch.

As the narrative spread, emotional reactions reinforced the interpretation that something larger was unfolding, even though the underlying data had not changed. By the time more precise context emerged, the initial framing had already taken hold.

The “Use It or Lose It” Logic of Virality

The initial posts that circulated widely were not detailed analyses, but rapid reposts of the headline, often stripped of its original context. As the story began to trend, users encountered a familiar dilemma: whether to wait for additional information or to share immediately while the topic was gaining attention. In fast-moving situations, that window can close quickly. Waiting to verify information risks missing the moment when a story is most visible.

This dynamic creates what can be understood as a “use it or lose it” logic. Information is most valuable when it is new and circulating widely. As a result, users are incentivized to share content as soon as they encounter it, even if the underlying details remain unclear. In the case of the Taiwan activity report, this pressure contributed to the rapid spread of simplified and, at times, misleading interpretations of the original article.

Research on information diffusion suggests that time pressure plays a significant role in reducing verification behavior. When individuals are required to make quick decisions about whether to share content, they rely more heavily on heuristic cues, such as the tone of a headline or the apparent urgency of a claim, rather than engaging in careful evaluation.[xviii] When the emotions involved are negative in valence (fear or anger) and arousal is stronger, the effect is even larger, effectively bypassing internal “fact-checking” mechanisms. In practice, this means that speed can substitute for accuracy in shaping what information circulates most widely.

In the hours following the Politico report, this mechanism was visible in how the story evolved. As more users shared increasingly simplified versions of the original claim, the narrative moved further away from the underlying data. Each iteration prioritized immediacy over precision, reinforcing a version of events that was easier to transmit but less accurate.

Importantly, this process does not require intentional deception. The users participating in the spread of the narrative are often responding rationally to platform incentives that reward speed, visibility, and engagement. The result, however, is an information environment in which early interpretations, rather than verified ones, play a disproportionate role in shaping collective understanding.

Visual Misinformation and the Power of the Map

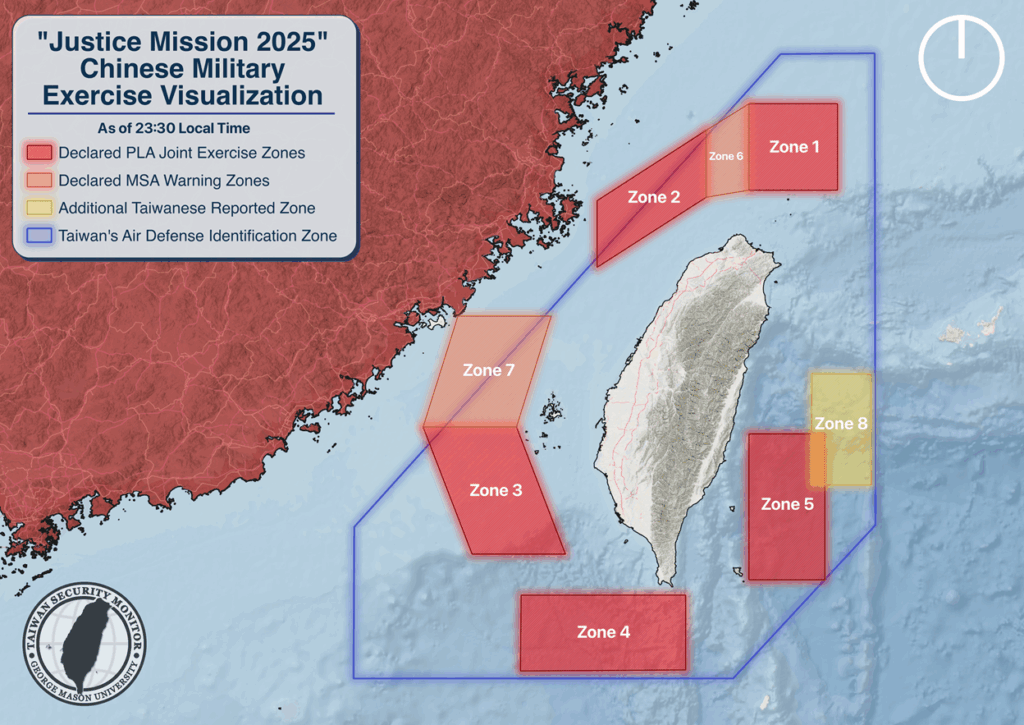

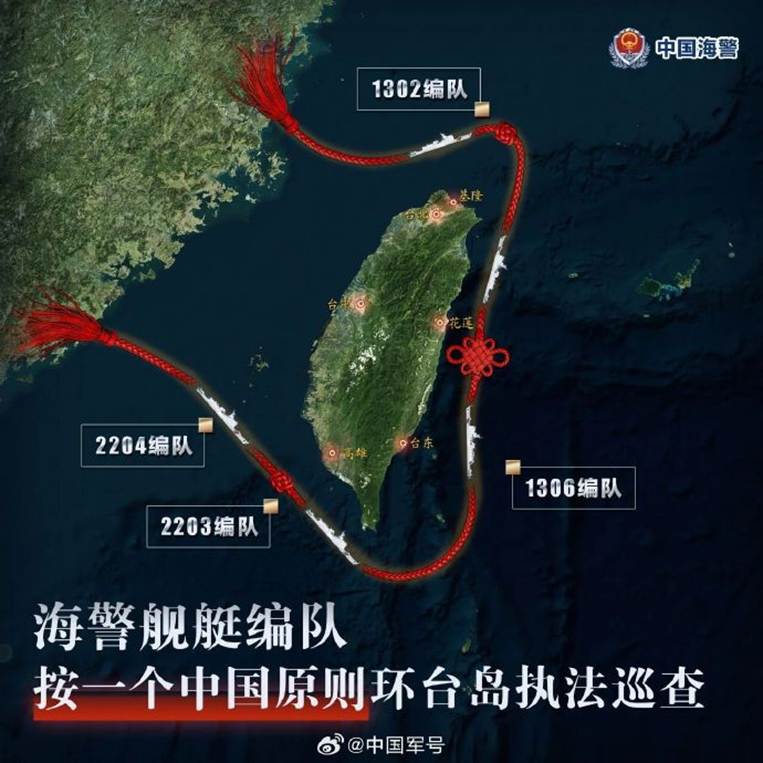

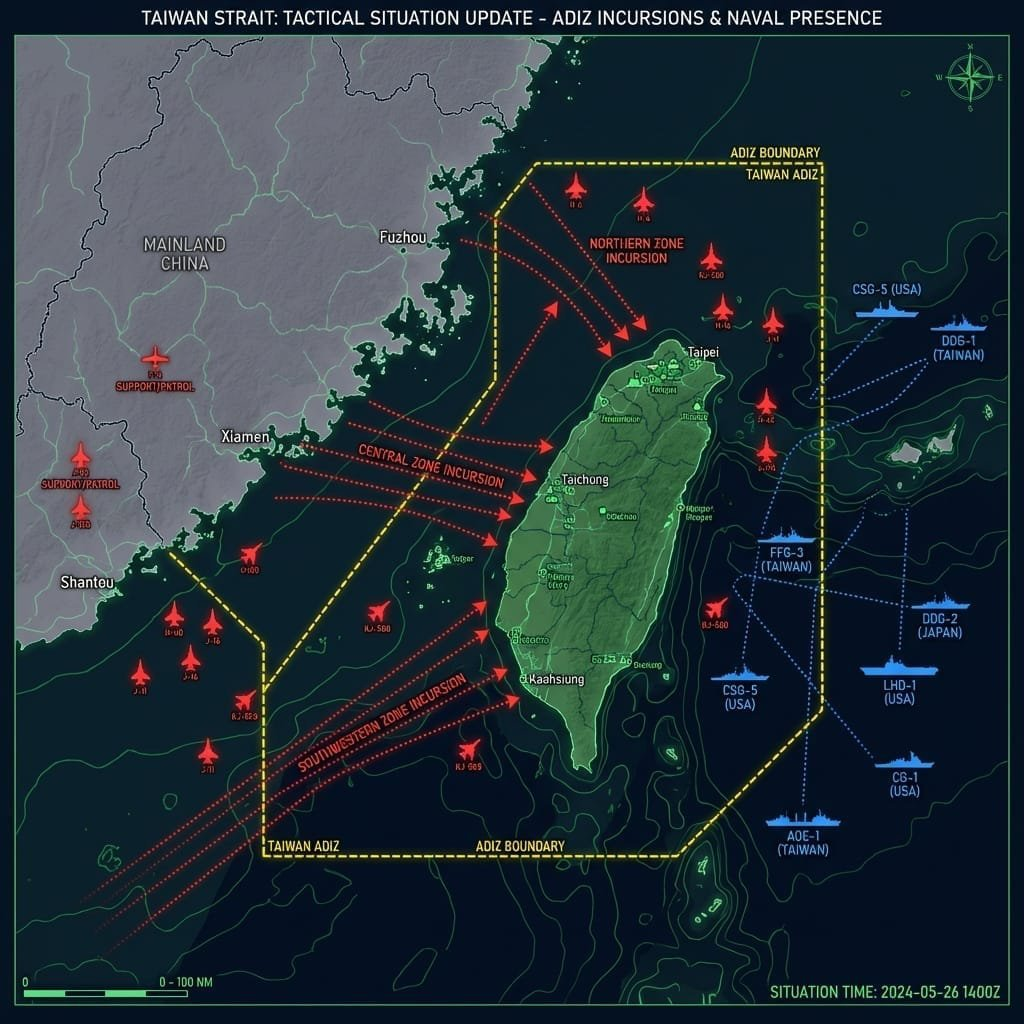

The misinformation surge surrounding the Taiwan activity report was further amplified by the circulation of a misleading map.

Images carry a particular authority online. A map, diagram, or chart often appears more credible than text because it looks technical and objective. For many readers, visual representation functions as evidence rather than interpretation.[xix]

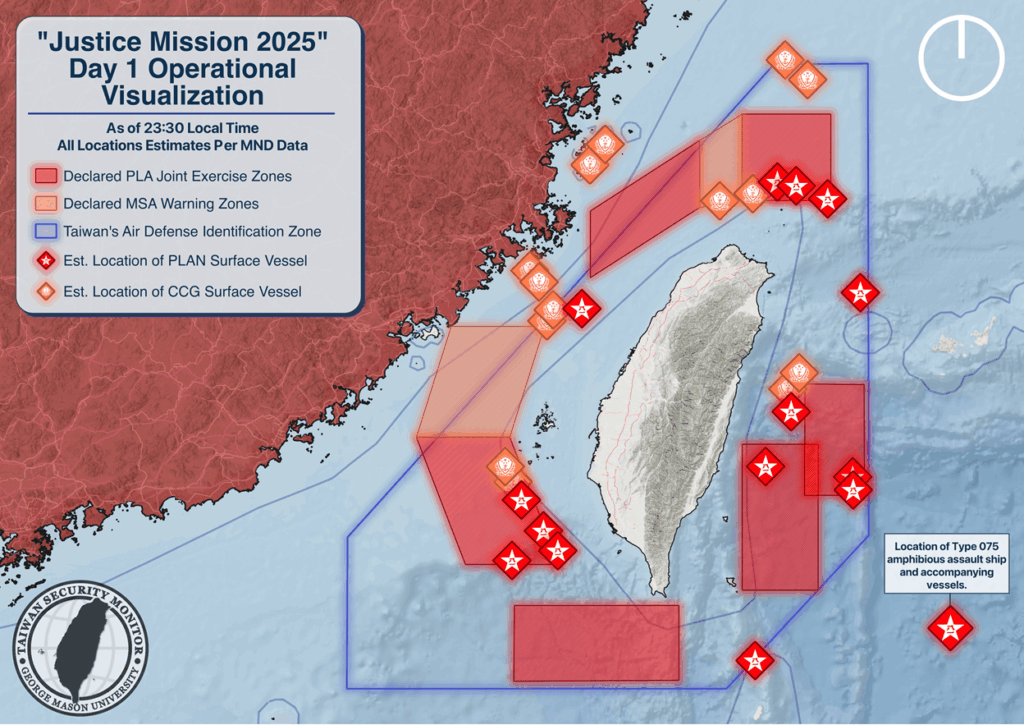

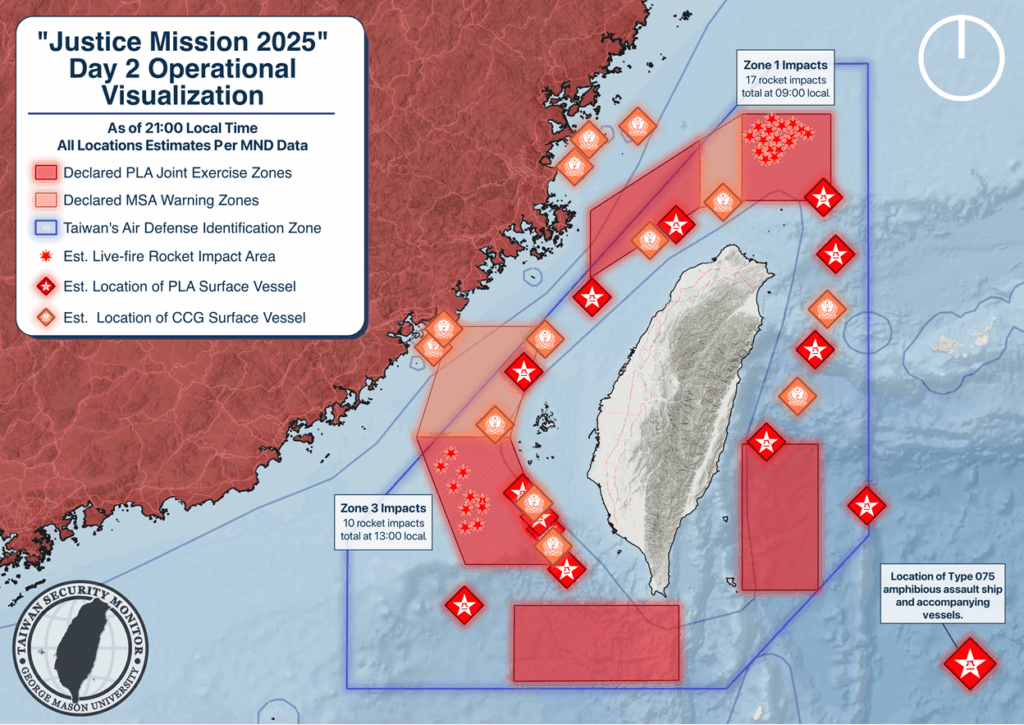

In this case, users circulated a map that purported to show Taiwan surrounded by Chinese forces. The image appeared to provide visual confirmation of the encirclement narrative.[xx] However, the map was not current. It instead showed information from a Chinese exercise in May 2024 (Joint Sword-2024A).

Despite this discrepancy, the image spread widely because it aligned with the narrative already circulating online. Once paired with the phrase “Taiwan surrounded,” the map helped transform a contested claim into something that looked authoritative.

This illustrates the powerful role visual content plays in misinformation ecosystems. Textual claims invite debate. Images often suppress it.

Why Taiwan Is Particularly Vulnerable

The Taiwan information environment is especially susceptible to rapid misinformation cascades due to several reinforcing factors.

First, China’s activity around Taiwan is both real and visible. The PLA regularly conducts air and naval exercises that simulate encirclement. Because these activities are genuine, reports about them carry immediate credibility, gain traction easily, and are readily incorporated into alarmist interpretations.

Second, many audiences lack the context needed to interpret these developments. Without familiarity with PLA operational patterns, even routine activity can appear extraordinary.

Third, these factors combine with a media environment that rewards simplification. Because the Taiwan Strait is a flashpoint that many fixate on, often through the lens of great-power rivalry, social media platforms reward provocative messaging about it. Complex operational data rarely goes viral; emotionally resonant narratives do.

Together, these factors create what might be described as a fear market, in which worst-case interpretations consistently attract attention and engagement.

The Anatomy of a Misinformation Cascade

The episode surrounding the “Taiwan surrounded” narrative illustrates a broader pattern in contemporary disinformation dynamics.

The process often follows a recognizable sequence:

- A real event occurs.

- Initial reporting frames the event in simplified terms.

- Emotionally charged interpretations amplify the story.

- Users rapidly repost the information without verification.

- Speculative narratives accumulate around the original claim.

- Visual content reinforces the narrative’s apparent credibility.

- The story stabilizes as a widely accepted, but inaccurate, account.

Importantly, this sequence does not require deliberate fabrication. The most effective misinformation often begins with something that is true. What changes is the interpretation.

Precision as Resilience

The lesson from this episode is not that analysts should dismiss reports of PLA activity or treat social-media reactions as irrelevant. Instead, it highlights the importance of maintaining precision in the way military developments are described and interpreted. In the Taiwan information environment, the difference between “increased PLA activity” and “Taiwan is surrounded” is not a matter of rhetorical nuance. It represents the boundary between analysis and alarmism.

Once that boundary is crossed, the information environment becomes difficult to correct. Viral narratives spread faster than careful explanations, and emotionally compelling interpretations often outcompete technical accuracy.

Precision therefore becomes a form of resilience. Analysts, journalists, and policymakers who communicate clearly about military developments help prevent routine operational activity from being misinterpreted as strategic escalation.

The recent surge of misinformation surrounding the Taiwan activity report demonstrates how quickly ambiguity can be converted into certainty online. It also serves as a reminder that in crisis-prone environments, the first casualty is often not truth itself, but proportion.

[i] https://www.politico.com/news/2026/03/15/taiwan-reports-large-scale-chinese-military-aircraft-presence-near-island-00829219

[ii] https://x.com/unusual_whales/status/2033235867616383090?s=20

[iii] https://tsm.schar.gmu.edu/justice-mission-2025-the-narrative-battle-inside-chinas-latest-taiwan-exercise/

[iv] https://www.mnd.gov.tw/en/news/plaact/86327

[v] https://www.politico.com/news/2026/03/15/taiwan-reports-large-scale-chinese-military-aircraft-presence-near-island-00829219?utm_medium=twitter&utm_source=dlvr.it

[vi] https://www.mnd.gov.tw/en/news/plaact/86330

[vii] https://www.cnn.com/2026/03/12/asia/china-taiwan-buzzing-mystery-intl-hnk, https://www.bbc.com/zhongwen/articles/c2lr8ejq0w8o/simp

[viii] https://x.com/rawsalerts/status/2033282084282695857?s=20, https://x.com/Defence_Index/status/2033172908277891415?s=20, https://x.com/Globalsurv/status/2033265245582413860?s=20

[ix] https://x.com/SpencerHakimian/status/2033282256962208210?s=20, https://x.com/Globalsurv/status/2033257539291242981?s=20

[x] https://x.com/krassenstein/status/2033284541788324217?s=20, https://x.com/HotSotin/status/2033292605811798043?s=20

[xi] https://x.com/GlobalIJournal/status/2033362180351852786?s=20, https://x.com/drhossamsamy65/status/2033270501947081180?s=20, https://x.com/PrimeH12995/status/2033572921365647430?s=20

[xii] Brandtzaeg, Petter Bae, and Marika Lüders. “Time collapse in social media: Extending the context collapse.” Social Media + Society 4, no. 1 (2018): 2056305118763349.

[xiii] Hilberts, Sonya, Mark Govers, Elena Petelos, and Silvia Evers. “The impact of misinformation on social media in the context of natural disasters: Narrative review.” JMIR Infodemiology 5 (2025): e70413.

[xiv] Shahbazi, Maryam, and Deborah Bunker. “Social media trust: Fighting misinformation in the time of crisis.” International Journal of Information Management 77 (2024): 102780.

[xv] Stieglitz, Stefan, and Linh Dang-Xuan. “Emotions and information diffusion in social media—sentiment of microblogs and sharing behavior.” Journal of Management Information Systems 29, no. 4 (2013): 217-248.

[xvi] Ecker, Ullrich KH, Stephan Lewandowsky, John Cook, Philipp Schmid, Lisa K. Fazio, Nadia Brashier, Panayiota Kendeou, Emily K. Vraga, and Michelle A. Amazeen. “The psychological drivers of misinformation belief and its resistance to correction.” Nature Reviews Psychology 1, no. 1 (2022): 13-29; Marcus, George E., W. Russell Neuman, and Michael MacKuen. Affective intelligence and political judgment. University of Chicago Press, 2000.

[xvii] https://www.bloomberg.com/news/articles/2026-02-10/the-10-trillion-fight-modeling-a-us-china-war-over-taiwan

[xviii] Talwar, Shalini, Amandeep Dhir, Dilraj Singh, Gurnam Singh Virk, and Jari Salo. “Sharing of fake news on social media: Application of the honeycomb framework and the third-person effect hypothesis.” Journal of Retailing and Consumer Services 57 (2020): 102197.

[xix] Rama, Daniele, Tiziano Piccardi, Miriam Redi, and Rossano Schifanella. “A large scale study of reader interactions with images on Wikipedia.” EPJ Data Science 11, no. 1 (2022): 1.

[xx] https://x.com/WealthWatcherCo/status/2033280246254895138?s=20